The Case for Gen UI: Designing Interfaces That Think

Why the next era of user interfaces must be fluid, contextual, and AI-native.

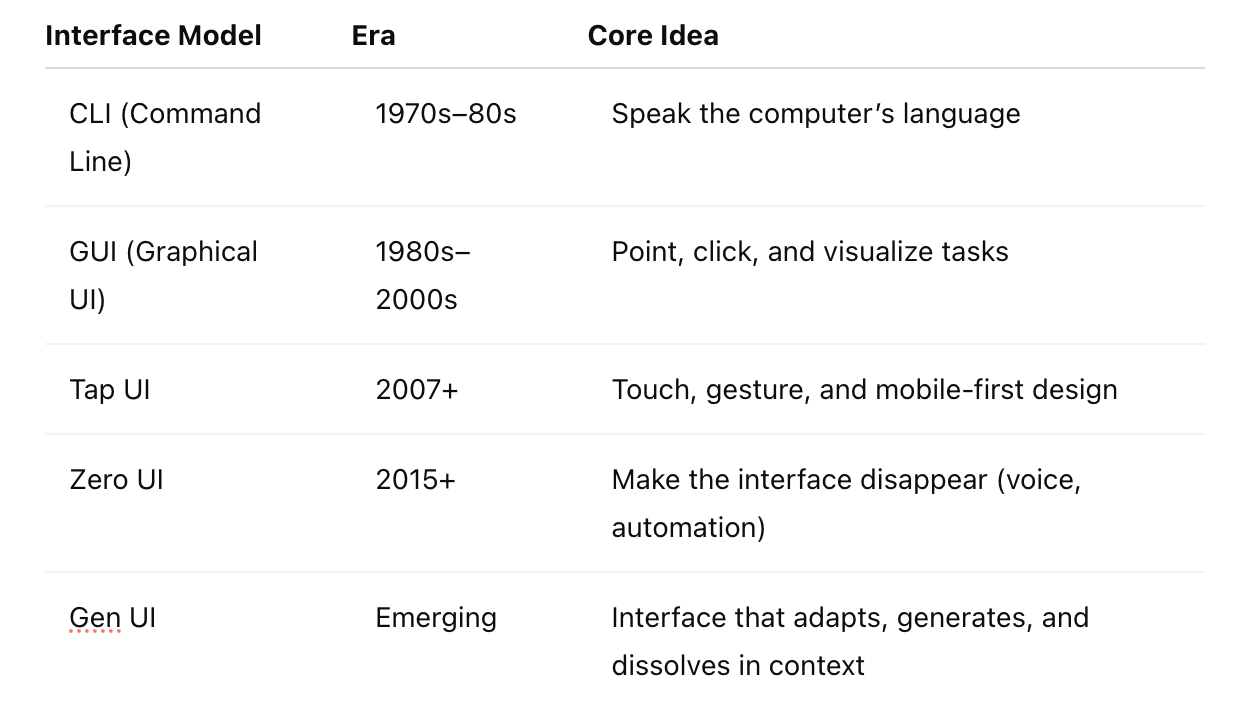

In the 1970s and 80s, interacting with computers meant typing commands at a blinking cursor. The Command Line Interface (CLI) was powerful—but cryptic. It required users to speak the computer’s language.

In the mid-1980s, the Graphical User Interface (GUI) changed everything. With the rise of the Macintosh and Windows, we moved from commands to icons, menus, and windows. Point-and-click became the universal language of computing—accessible, intuitive, visual.

By the late 2000s, we shifted again. The smartphone brought the touch interface: taps, swipes, and pinches, later joined by voice input and gestures. Interactions became more natural. Interfaces became more responsive. But the underlying structure (the screen, the layout, the form) remained mostly static.

Now, in the AI decade, existing interface models are starting to show their limits. The paradigms we've relied on—whether graphical, tap-based, or conversational—are struggling to keep up with systems that generate, infer, and adapt. And as interactions increasingly span human-to-machine, machine-to-machine, and machine-to-human use cases, the demands on interface design are fundamentally changing. A new kind of shift is becoming necessary.

Not just in how we interact—but in how software responds.

Why Today’s Interfaces Are Breaking

What if we’ve reached the edge of what today’s interfaces can do? What if the systems around us—now generative, autonomous, and context-aware—are outgrowing the UI paradigms we built decades ago?

What comes next isn’t obvious.

But imagine this: An interface that reshapes itself based on the user, the task, the moment. A system that doesn’t just wait for input—but senses, adjusts, and acts.

Enter Gen UI.

Gen UI is a proposal—a direction rather than a destination. It reflects the idea that interfaces, like the systems behind them, can become intelligent, contextual, and agentic. It doesn’t replace GUI, chat, or tap—it weaves them into something more fluid.

Interfaces That Think

Gen UI is an adaptive, ephemeral, context-aware interface model—generated dynamically in response to user intent and system state, and discarded once its purpose is fulfilled.

Gen UI is more than just a new interface—it’s a shift in mindset. It’s made possible by the underlying architecture of what we might call Infinite UI.

If Gen UI is the framing, Infinite UI is the engine beneath it. Gen UI is how we recognize the shift; Infinite UI is how that shift operates—ephemeral, adaptive, and generative at its core.

Imagine an interface that doesn’t exist until you need it—and disappears once it has served its purpose.

Like serverless computing, where functions run only when a request arrives and shut down when idle, Infinite UI suggests a model where interaction surfaces are assembled dynamically in response to user intent, contextual signals, and system state. These surfaces are not fixed layouts or navigational trees but momentary expressions of logic, rendered just-in-time and disposed of just as quickly.

No two sessions may be the same. No static form to return to.
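To make the serverless analogy a little more concrete, here is a minimal TypeScript sketch of that lifecycle: a surface is generated from intent and state, mounted, and then discarded. Every name in it (`SurfaceSpec`, `generateSurface`, `mount`, `dispose`, the component ids) is hypothetical and stands in for whatever a real renderer would provide; it shows the shape of the idea, not an existing framework.

```typescript
// Minimal sketch of an ephemeral, just-in-time interface surface.
// All names (SurfaceSpec, generateSurface, component ids) are hypothetical.

interface Intent {
  goal: string; // e.g. "review" or "schedule"
}

interface SystemState {
  openTasks: number;
}

interface SurfaceSpec {
  id: string;
  components: string[];
}

let nextId = 0;

// Assemble a surface only when intent arrives, the way a serverless
// platform starts a function only when a request comes in.
function generateSurface(intent: Intent, state: SystemState): SurfaceSpec {
  const components = ["summary"];
  if (state.openTasks > 0) components.push("taskList");
  if (intent.goal === "schedule") components.push("calendarPicker");
  return { id: `${intent.goal}-${nextId++}`, components };
}

// Registry of surfaces currently on screen.
const liveSurfaces = new Map<string, SurfaceSpec>();

function mount(spec: SurfaceSpec): void {
  liveSurfaces.set(spec.id, spec);
}

// Once the task is done, the surface is discarded; no layout persists.
function dispose(id: string): void {
  liveSurfaces.delete(id);
}

// Usage: generate, show, and discard a scheduling surface.
const surface = generateSurface({ goal: "schedule" }, { openTasks: 3 });
mount(surface);
console.log(surface.components.join(", ")); // summary, taskList, calendarPicker
dispose(surface.id);
console.log(liveSurfaces.size); // 0
```

The point of the sketch is the lifecycle rather than the rules: nothing persists between requests except the registry of surfaces still in use.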

This is not just about convenience—it’s about aligning interaction with the fluid, generative, and agentic nature of AI-native systems.

Gen UI aims to combine the clarity of command lines, the usability of graphical interfaces, and the fluidity of modern input (tap, voice, gesture), while introducing a new ingredient: adaptability.

It’s not just smarter—it’s an interface that responds. It listens. It reshapes. It becomes an active participant in helping users get to outcomes.

Hybrid Interfaces: From Chat to Classical

One expression of Gen UI is the hybrid interface. Imagine a user in a project management tool starting with a prompt: “Show me all delayed tasks for Q3 with budgets over $50K.”

The system responds conversationally, listing the matching tasks. But the moment the user says, “Sort by owner,” the interface morphs into a dynamic table, revealing filters, inline editing, and charting options.

A simple request to “Set a review meeting for all of them next week” triggers the interface to shift again, pulling in calendar availability and generating draft invites—all without needing to navigate separate screens.

Chat, GUI, and workflow co-exist. The interface flexes around user intent.

This is not about replacing form with conversation. It’s about moving fluidly between modes—depending on task, intent, and context.
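A minimal sketch of the mode switching in that example might look like the following TypeScript. The keyword rules are a deliberately crude stand-in for what would more plausibly be a model-based intent classifier, and the `Mode` names are hypothetical.

```typescript
// Minimal sketch of mode switching in a hybrid interface.
// Keyword rules stand in for a model-based intent classifier;
// the Mode names are hypothetical.

type Mode = "chat" | "table" | "scheduler";

function routeIntent(utterance: string, current: Mode): Mode {
  const t = utterance.toLowerCase();
  if (/\b(sort|filter|group)\b/.test(t)) return "table";
  if (/\b(meeting|schedule|invite)\b/.test(t)) return "scheduler";
  if (/\b(show|list|find)\b/.test(t)) return "chat";
  return current; // unrecognized intent: keep the current surface
}

// Replay the three requests from the project-management example.
const prompts = [
  "Show me all delayed tasks for Q3 with budgets over $50K.",
  "Sort by owner",
  "Set a review meeting for all of them next week",
];

let mode: Mode = "chat";
for (const p of prompts) {
  mode = routeIntent(p, mode);
  console.log(`${mode}: ${p}`); // chat, then table, then scheduler
}
```

Falling back to the current mode when no rule matches is one way to keep the interface from flickering between surfaces on ambiguous input.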

Context Is the Engine

Without context, Gen UI doesn’t work.

It’s not personalization for its own sake—it’s situational awareness. For a UI to adapt, it must understand:

Who the user is

What they’re doing

Where they are in a workflow

What just happened

What’s likely next

Enterprise systems are rich in context (roles, histories, data trails, connected applications), but most interfaces ignore it. Gen UI calls on us to design UIs that use that context to shape the interaction in real time.
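One way to picture this is a function that turns those five questions into a concrete surface. The TypeScript sketch below assumes a hypothetical `UserContext` shape and hypothetical component ids; the rules are illustrative only.

```typescript
// Minimal sketch: mapping the five context questions to a surface.
// Field names, roles, and component ids are hypothetical.

interface UserContext {
  role: "manager" | "analyst"; // who the user is
  activity: string;            // what they're doing
  step: number;                // where they are in a workflow...
  totalSteps: number;          // ...out of how many steps
  lastEvent?: string;          // what just happened
  likelyNext?: string;         // what's likely next (e.g. from a model)
}

function composeSurface(ctx: UserContext): string[] {
  const parts = [`header:${ctx.activity}`];
  // Role decides the primary panel.
  parts.push(ctx.role === "manager" ? "approvalQueue" : "dataGrid");
  // Show progress only while the workflow is still in flight.
  if (ctx.step < ctx.totalSteps) parts.push(`progress:${ctx.step}/${ctx.totalSteps}`);
  // Surface a recent failure instead of burying it in a log.
  if (ctx.lastEvent === "validationFailed") parts.push("errorPanel");
  // Pre-stage the predicted next action as a one-click shortcut.
  if (ctx.likelyNext) parts.push(`shortcut:${ctx.likelyNext}`);
  return parts;
}

const example = composeSurface({
  role: "manager",
  activity: "budgetReview",
  step: 2,
  totalSteps: 4,
  lastEvent: "validationFailed",
  likelyNext: "resubmit"
});
console.log(example.join(" | "));
// header:budgetReview | approvalQueue | progress:2/4 | errorPanel | shortcut:resubmit
```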

Interface Lineage

Gen UI builds on a rich lineage of interface paradigms:

Zero UI pushed us to minimize or even eliminate interfaces—favoring automation, voice, and ambient interactions. Its goal was to remove friction by making interfaces disappear.

Conversational UI introduced bots and assistants, placing natural language at the center of interaction.

Headless UI decoupled the front end from the backend, enabling flexible delivery across modalities.

Each of these challenged traditional assumptions about what a UI should look like and how users should interact with systems.

Gen UI takes the next step—bringing adaptability and real-time responsiveness into the interface itself. It doesn’t just remove or relocate the UI; it reshapes it in response to user context and system intelligence.

From Zero to Gen UI, we’ve moved from invisibility to fluidity—from minimizing UI to making it ephemeral and endlessly adaptive.

Looking Ahead: Voice, Space, and Beyond

Gen UI doesn't stop at screens.

Voice becomes a natural modality for intent capture—not standalone, but embedded in workflows.

Spatial interfaces (via AR or spatial computing) let users work with information in immersive, contextual environments.

These aren’t side experiments. They’re signs of the interface becoming more environmental—more embodied.

Why This Matters

In enterprise software, friction is expensive. Every extra click, every hidden field, every mental map a user must carry adds up. A widely cited McKinsey Global Institute estimate puts the time knowledge workers spend searching for and gathering information at about 19% of the workweek. That is nearly one day a week spent finding things instead of doing the work.

As AI systems become more autonomous and decision-support workloads grow more complex, traditional UIs not only slow teams down—they constrain what users can accomplish.

Gen UI offers a chance to:

Reduce effort and cognitive load

Shorten time-to-action across workflows

Make software feel like a partner, not just a panel

For product leaders, this isn’t just an opportunity. It’s a coming shift in competitive advantage.

Is Gen UI Feasible?

Yes—but it's still taking shape. We’re not starting from zero.

No one knows exactly what Gen UI will look like in three or five years. We’re seeing fragments: experiments and prototypes. Yet the concept becomes more feasible when grounded in the architecture of Infinite UI: interfaces that are ephemeral, generated on demand, and shaped entirely by context. The idea is evolving along with emerging tools, shifting user expectations, and new architectural possibilities.

Emerging frameworks like SvelteKit and Solid.js offer reactive architectures that make real-time UI reshaping possible.
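The core idea behind those reactive architectures is fine-grained dependency tracking, often called signals. The TypeScript below is a from-scratch sketch of that idea, not the actual implementation or API of Solid.js or Svelte: reading a signal inside an `effect` subscribes that effect, and writing the signal re-runs only its subscribers.

```typescript
// Minimal from-scratch sketch of fine-grained reactivity ("signals").
// This is NOT Solid.js's or Svelte's implementation or API; it only shows
// the dependency-tracking idea such frameworks build on.

type Effect = () => void;
let activeEffect: Effect | null = null;

function signal<T>(initial: T): [() => T, (next: T) => void] {
  let value = initial;
  const subscribers = new Set<Effect>();
  const read = (): T => {
    // Record whichever effect is currently running as a dependent.
    if (activeEffect) subscribers.add(activeEffect);
    return value;
  };
  const write = (next: T): void => {
    value = next;
    // Re-run only the effects that read this signal.
    Array.from(subscribers).forEach((fn) => fn());
  };
  return [read, write];
}

function effect(fn: Effect): void {
  const run: Effect = () => {
    const previous = activeEffect;
    activeEffect = run;
    try {
      fn();
    } finally {
      activeEffect = previous;
    }
  };
  run();
}

// Usage: a view that re-renders only when the mode it depends on changes.
const [viewMode, setViewMode] = signal<"chat" | "table">("chat");
const renders: string[] = [];
effect(() => {
  renders.push(`render ${viewMode()}`);
});
setViewMode("table");
console.log(renders.join(", ")); // render chat, render table
```

Production libraries add what this sketch leaves out, such as dropping stale subscriptions, batching writes, and scheduling updates.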

WebAssembly (Wasm) allows us to run high-performance logic in the browser—from AI inference to dynamic layout generation.

GraphQL, event streams, and edge APIs make contextual data more available at the point of interaction.
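To illustrate how an event stream can keep context current at the point of interaction, here is a small TypeScript reducer sketch. The event kinds, the `InteractionContext` shape, and the `overBudget` view are hypothetical; in practice the events might arrive through a GraphQL subscription, a WebSocket, or server-sent events.

```typescript
// Minimal sketch: folding an event stream into interaction context.
// Event kinds and the InteractionContext shape are hypothetical.

type AppEvent =
  | { kind: "taskOpened"; taskId: string }
  | { kind: "taskClosed"; taskId: string }
  | { kind: "budgetChanged"; taskId: string; amount: number };

interface InteractionContext {
  openTasks: Set<string>;
  budgets: Map<string, number>;
  lastEvent?: AppEvent["kind"];
}

// Pure reducer: each event yields a new context snapshot.
function applyEvent(ctx: InteractionContext, ev: AppEvent): InteractionContext {
  const openTasks = new Set(ctx.openTasks);
  const budgets = new Map(ctx.budgets);
  switch (ev.kind) {
    case "taskOpened":
      openTasks.add(ev.taskId);
      break;
    case "taskClosed":
      openTasks.delete(ev.taskId);
      break;
    case "budgetChanged":
      budgets.set(ev.taskId, ev.amount);
      break;
  }
  return { openTasks, budgets, lastEvent: ev.kind };
}

// Rebuild the current context by replaying the stream from the start.
function replay(events: AppEvent[]): InteractionContext {
  const initial: InteractionContext = { openTasks: new Set<string>(), budgets: new Map<string, number>() };
  return events.reduce(applyEvent, initial);
}

// Derived view: open tasks whose budget exceeds a limit.
function overBudget(ctx: InteractionContext, limit = 50_000): string[] {
  return Array.from(ctx.budgets)
    .filter(([id, amount]) => amount > limit && ctx.openTasks.has(id))
    .map(([id]) => id);
}

const current = replay([
  { kind: "taskOpened", taskId: "T-1" },
  { kind: "budgetChanged", taskId: "T-1", amount: 72_000 },
  { kind: "taskOpened", taskId: "T-2" },
  { kind: "budgetChanged", taskId: "T-2", amount: 80_000 },
  { kind: "taskClosed", taskId: "T-2" },
]);
console.log(overBudget(current).join(", ")); // T-1
```

Keeping the reducer pure means the same stream can be replayed on the server, at the edge, or in the browser and yield the same context.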

And increasingly, server-side rendering (SSR) is being reimagined—not just to speed up page loads, but to enable UI states to be generated on-demand based on context. In a Gen UI model, SSR isn't just about performance; it's about adaptability.
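A minimal sketch of that idea in TypeScript: the server derives a context from each request and generates the page state from it. `RequestLike`, the `x-user-role` header, and both layouts are hypothetical; `renderPage` could be called from whatever request handler a given server framework provides.

```typescript
// Minimal sketch of context-driven server-side rendering: each request gets
// a UI state generated from its context instead of one fixed page.
// RequestLike, the x-user-role header, and the layouts are hypothetical.

interface RequestLike {
  headers: Record<string, string | undefined>;
}

interface RenderContext {
  role: "approver" | "viewer";
  device: "mobile" | "desktop";
  pendingApprovals: number;
}

function contextFrom(req: RequestLike, pendingApprovals: number): RenderContext {
  const userAgent = req.headers["user-agent"] ?? "";
  return {
    // Whitelist the role so untrusted header values never reach the markup.
    role: req.headers["x-user-role"] === "approver" ? "approver" : "viewer",
    device: /Mobile/i.test(userAgent) ? "mobile" : "desktop",
    pendingApprovals,
  };
}

function renderPage(ctx: RenderContext): string {
  const sections: string[] = [];
  if (ctx.role === "approver" && ctx.pendingApprovals > 0) {
    sections.push(`<section id="approvals">${ctx.pendingApprovals} pending</section>`);
  }
  // Compact navigation on phones, full dashboard on larger screens.
  sections.push(ctx.device === "mobile" ? '<nav class="compact"></nav>' : '<aside class="dashboard"></aside>');
  return `<main data-role="${ctx.role}">${sections.join("")}</main>`;
}

console.log(renderPage(contextFrom({ headers: { "x-user-role": "approver", "user-agent": "Mobile Safari" } }, 3)));
// <main data-role="approver"><section id="approvals">3 pending</section><nav class="compact"></nav></main>
```

In a real system the approval count would come from a data source, and device detection from a user-agent string is a rough heuristic; both are used here only to keep the sketch self-contained.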

These aren’t silver bullets—but they signal that Gen UI isn’t science fiction. It’s already coming into view, one layer at a time.

Final Thoughts

Gen UI is not a product or a plugin. It may not even be a design philosophy—at least not yet.

It’s an emerging idea, shaped by the promise of generative systems and the limitations of today’s rigid interfaces.

It asks us to imagine software that:

Responds, not just renders

Adapts, not just displays

Collaborates, not just contains

We may not know exactly what it will become. But it’s worth exploring.

The future of UI is not more screens. It’s fewer assumptions.